What is AWS CodePipeline?

AWS CodePipeline is a fully managed continuous delivery service that helps you automate your release pipeline. It allows users to build, test and deploy code into a test or production environment using either the AWS CLI or a straightforward UI configuration process in the AWS Management Console.

Key takeaways

- CodePipeline is highly configurable and has a very short learning curve.

- You must configure IAM roles to ensure that those who need access have it and those who don’t are restricted.

- AWS CodePipeline can integrate with tools and services, like GitHub and Jenkins.

The benefits of using Amazon Web Services CodePipeline

AWS CodePipeline works with many of the services and tools already in the AWS environment, such as AWS CodeCommit, AWS CodeStar, Amazon ECR, AWS Identity and Access Management (IAM), Amazon ECS, AWS CDK, Amazon EC2, the AWS Management Console, Amazon Linux, AWS Step Functions and AWS CodeDeploy. It isn’t limited to AWS services, either: users can also create integrations with tools and services like GitHub and Jenkins.


Amazon Web Services CodePipeline is highly configurable and has a very short learning curve. Those familiar with the AWS ecosystem will find it easy to create a CI/CD pipeline for their applications and services.

Figure 1. The complete pipeline is executed in response to a code change.

Setting up your new pipeline in AWS CodePipeline

The first step is choosing a source provider: Amazon S3, AWS CodeCommit or GitHub. When you select an option, the appropriate fields appear below the selection. To use S3 as the source, you’ll need to provide the name of an S3 bucket with versioning enabled, along with the object key of the source archive. CodeCommit and GitHub involve a few more steps, so let’s look at how to integrate each of them in a little more detail so you know what to expect.
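For readers who prefer the AWS CLI or an SDK over the console, each choice in this wizard ends up as an action in the pipeline’s JSON structure (the same shape `aws codepipeline get-pipeline` returns). The sketch below builds the S3 source action as a Python dictionary. The bucket and key names are hypothetical, and the artifact name `SourceOutput` is our own choice.

```python
import json


def s3_source_action(bucket: str, object_key: str) -> dict:
    """Return a CodePipeline source action that reads a zip archive from S3.

    The bucket must have versioning enabled; CodePipeline uses the object's
    version ID to identify each source revision.
    """
    return {
        "name": "Source",
        "actionTypeId": {
            "category": "Source",
            "owner": "AWS",
            "provider": "S3",
            "version": "1",
        },
        "configuration": {
            "S3Bucket": bucket,         # versioned bucket holding the source
            "S3ObjectKey": object_key,  # key of the zip file to pull
        },
        "outputArtifacts": [{"name": "SourceOutput"}],
        "runOrder": 1,
    }


# Hypothetical bucket and key, for illustration only.
print(json.dumps(s3_source_action("my-versioned-source-bucket", "email-validator/source.zip"), indent=2))
```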

CodeCommit and CodePipeline

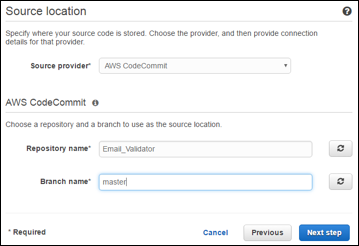

In this example, I have a CodeCommit repository set up under the name Email_Validator, and I will use the master branch as the source for the pipeline.

Figure 3. Setting the Source to a CodeCommit Repository
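Expressed in the pipeline’s JSON structure, the CodeCommit source configured above would look roughly like the following sketch. The repository and branch come from this example; the action and artifact names (`Source`, `SourceOutput`) are our own choices.

```python
import json


def codecommit_source_action(repository: str, branch: str) -> dict:
    """Return a source action that pulls the latest commit on `branch` of a CodeCommit repo."""
    return {
        "name": "Source",
        "actionTypeId": {
            "category": "Source",
            "owner": "AWS",
            "provider": "CodeCommit",
            "version": "1",
        },
        "configuration": {
            "RepositoryName": repository,
            "BranchName": branch,
        },
        # Later stages refer to the checked-out code by this artifact name.
        "outputArtifacts": [{"name": "SourceOutput"}],
        "runOrder": 1,
    }


print(json.dumps(codecommit_source_action("Email_Validator", "master"), indent=2))
```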

GitHub and CodePipeline

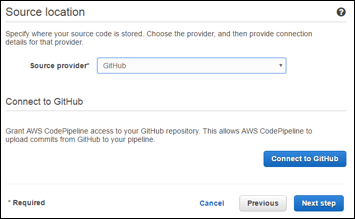

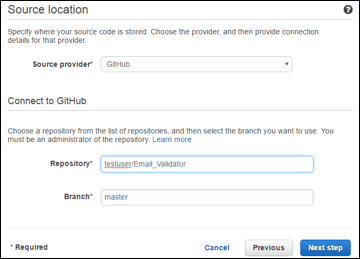

The AWS CodePipeline integration with GitHub is relatively simple as well. After selecting GitHub as the source provider, click the Connect to GitHub button.

Figure 4a. Setting the Source to a GitHub Repository

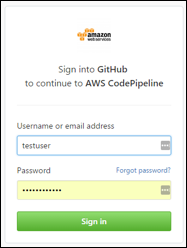

To connect to GitHub, you’ll need to authenticate to your GitHub account. Your GitHub credentials should be entered here, not your AWS credentials.

Figure 4b. Authenticating to GitHub


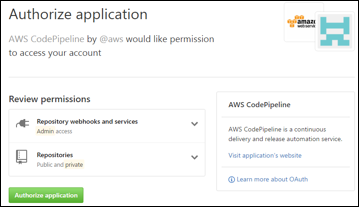

Once you’ve authenticated to GitHub, you’ll need to review and authorize the permissions AWS requests: admin access to repository webhooks and services, and access to all public and private repositories. Clicking the Authorize application button returns you to AWS CodePipeline to complete the integration.

Figure 4c. Reviewing and Authorizing AWS Access to your GitHub Account

Finally, you’ll need to select the repository and branch to use as your source. Fortunately, the integration allows AWS to auto-populate the text boxes, so you don’t have to do too much additional typing.

Figure 4d. Identifying the GitHub Repository and Branch as the Source
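The OAuth-based flow in these screenshots corresponds to what AWS now documents as the GitHub (version 1) source action, which AWS no longer recommends for new pipelines; newer pipelines typically reach GitHub through a connection instead. The sketch below assumes that connection-based approach (provider `CodeStarSourceConnection`) and an existing connection, so the ARN, owner and repository names are hypothetical placeholders.

```python
import json


def github_source_action(connection_arn: str, owner: str, repo: str, branch: str) -> dict:
    """Return a source action that pulls `branch` of owner/repo through a connection."""
    if not owner or not repo or "/" in owner or "/" in repo:
        raise ValueError("owner and repo must be non-empty names without slashes")
    return {
        "name": "Source",
        "actionTypeId": {
            "category": "Source",
            "owner": "AWS",
            "provider": "CodeStarSourceConnection",
            "version": "1",
        },
        "configuration": {
            "ConnectionArn": connection_arn,
            "FullRepositoryId": f"{owner}/{repo}",  # e.g. "my-org/Email_Validator"
            "BranchName": branch,
        },
        "outputArtifacts": [{"name": "SourceOutput"}],
        "runOrder": 1,
    }


# Placeholder ARN: substitute the ARN of a connection you have created.
action = github_source_action(
    "arn:aws:codestar-connections:us-east-1:111122223333:connection/EXAMPLE",
    "my-org",
    "Email_Validator",
    "master",
)
print(json.dumps(action, indent=2))
```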

Now that we have a source, it’s time to work on the build process.

Defining the build process for your pipeline

The next step in creating the pipeline is to define the build provider. At the time of this writing, AWS offers three options for build providers:

- AWS CodeBuild

- Jenkins

- Solano CI


Let’s look at how to configure AWS CodeBuild or Jenkins as the build provider.

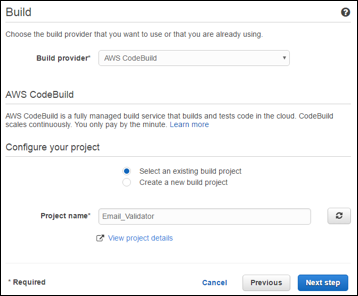

AWS CodeBuild integration

For this example, I already have a CodeBuild project called Email_Validator. If you already have a build project configured, select AWS CodeBuild as the Build provider, click the Select an existing build project radio button, and then choose the project from the list in the Project name box.

If you like, you can also define a new CodeBuild project as part of this process. It requires the same inputs as setting up a CodeBuild project independently in the AWS environment, but that is beyond the scope of this article.

Figure 5. Configure the Build to use a CodeBuild Project
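In the pipeline’s JSON structure, selecting an existing CodeBuild project reduces to a build action that names the project. This is a minimal sketch; the artifact names `SourceOutput` and `BuildOutput` are our own choices and must match whatever the neighboring stages use.

```python
import json


def codebuild_action(project_name: str, source_artifact: str = "SourceOutput") -> dict:
    """Return a build action that runs an existing CodeBuild project.

    The project receives the source stage's output artifact, and whatever its
    buildspec declares as artifacts is published under the name BuildOutput.
    """
    return {
        "name": "Build",
        "actionTypeId": {
            "category": "Build",
            "owner": "AWS",
            "provider": "CodeBuild",
            "version": "1",
        },
        "configuration": {"ProjectName": project_name},
        "inputArtifacts": [{"name": source_artifact}],
        "outputArtifacts": [{"name": "BuildOutput"}],
        "runOrder": 1,
    }


print(json.dumps(codebuild_action("Email_Validator"), indent=2))
```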

Jenkins integration

Adding Jenkins as part of the pipeline is useful if your builds are already configured to run on Jenkins, or if you have a Jenkins server running on an EC2 instance.

If you’re new to Jenkins, however, you will have to set up and configure the Jenkins server and create the appropriate build jobs. Before connecting Jenkins as the build step in the pipeline, ensure that the following prerequisites are set up and configured so that CodePipeline can connect to and interact with Jenkins:

- An IAM instance role that allows communication between CodePipeline and the Jenkins instance.

- The Rake and Haml Ruby gems installed on the instance that hosts the Jenkins service, with environment variables set so that Rake commands can run from the command line.

- The AWS CodePipeline Plugin for Jenkins installed on the Jenkins server.

Additionally, the Jenkins build job should be configured with a build trigger that polls AWS CodePipeline every minute, and with a post-build action that uses the AWS CodePipeline Publisher with the same Provider name defined in the pipeline’s Build step.
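For the IAM instance role prerequisite, a minimal sketch of the two policy documents involved might look like this: a trust policy that lets EC2 assume the role, and a permissions policy granting the CodePipeline job-worker API actions used to poll for jobs and report results. The exact action list is our assumption based on the CodePipeline job-worker API, and the wildcard `Resource` is there only for brevity.

```python
import json

# CodePipeline API actions a custom-action job worker (such as the Jenkins
# plugin) uses to find work and report results.
JOB_WORKER_ACTIONS = [
    "codepipeline:PollForJobs",
    "codepipeline:AcknowledgeJob",
    "codepipeline:GetJobDetails",
    "codepipeline:PutJobSuccessResult",
    "codepipeline:PutJobFailureResult",
]


def ec2_trust_policy() -> dict:
    """Trust policy allowing EC2 instances to assume the role."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Effect": "Allow",
                "Principal": {"Service": "ec2.amazonaws.com"},
                "Action": "sts:AssumeRole",
            }
        ],
    }


def jenkins_permissions_policy() -> dict:
    """Permissions policy for the Jenkins instance role."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Effect": "Allow",
                "Action": JOB_WORKER_ACTIONS,
                "Resource": "*",  # broad for simplicity; scope down where possible
            }
        ],
    }


print(json.dumps(ec2_trust_policy(), indent=2))
print(json.dumps(jenkins_permissions_policy(), indent=2))
```

Saved to files, these documents could be passed to `aws iam create-role --assume-role-policy-document` and `aws iam put-role-policy`, and the role then attached to the Jenkins instance through an instance profile.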

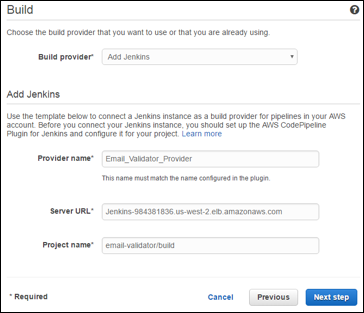

Once each item is set up and configured, you can include the Jenkins server as part of the pipeline. Start by selecting Add Jenkins in the Build provider selection box. Enter a Provider name, which must be identical to the Provider name in the post-build action of the Jenkins job. It doesn’t matter which one you set first, as long as the two match exactly.
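The Jenkins plugin handles CodePipeline’s job-worker protocol for you, but a simplified sketch of that protocol shows why the Provider names must match: the worker asks CodePipeline only for jobs whose action type carries its own provider name. This is not the plugin’s code. It assumes a boto3-style CodePipeline client, a hypothetical `run_build` callback standing in for the Jenkins job, and that the action’s configuration stores the Jenkins project under `ProjectName`.

```python
from typing import Callable, Optional


def process_one_job(client, provider_name: str, run_build: Callable[[str], bool]) -> Optional[str]:
    """Poll CodePipeline once for a Jenkins build job and report its result.

    Returns the job ID that was processed, or None if no job was waiting.
    """
    action_type = {
        "category": "Build",
        "owner": "Custom",
        "provider": provider_name,  # must equal the Provider name in the pipeline
        "version": "1",
    }
    jobs = client.poll_for_jobs(actionTypeId=action_type, maxBatchSize=1).get("jobs", [])
    if not jobs:
        return None
    job = jobs[0]
    # Claim the job so that no other worker picks it up.
    client.acknowledge_job(jobId=job["id"], nonce=job["nonce"])
    project = job["data"]["actionConfiguration"]["configuration"]["ProjectName"]
    if run_build(project):
        client.put_job_success_result(jobId=job["id"])
    else:
        client.put_job_failure_result(
            jobId=job["id"],
            failureDetails={"type": "JobFailed", "message": f"Build of {project} failed"},
        )
    return job["id"]
```

In practice you might pass `boto3.client("codepipeline")` as `client` and call this once a minute, mirroring the polling trigger described above.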

Finally, enter the Server URL and the name of the Jenkins project to run.

Figure 6. Configure the Pipeline to Build the Project with a Jenkins Job
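In the pipeline’s JSON structure, the Jenkins build step appears as an action whose owner is `Custom` and whose provider is the Provider name you entered. The sketch below also checks the matching rule described above. The version `"1"` and the `ProjectName` configuration key are assumptions based on the console-created Jenkins action, and as far as we know the Server URL is kept with the custom action type rather than in this action entry, so it is left out here.

```python
import json


def jenkins_build_action(provider_name: str, project_name: str, jenkins_publisher_provider: str) -> dict:
    """Return a pipeline build action backed by a Jenkins custom action.

    `jenkins_publisher_provider` is the Provider name configured in the Jenkins
    job's AWS CodePipeline Publisher post-build action; the two must match exactly.
    """
    if provider_name != jenkins_publisher_provider:
        raise ValueError(
            f"Provider name mismatch: pipeline uses {provider_name!r}, "
            f"Jenkins job uses {jenkins_publisher_provider!r}"
        )
    return {
        "name": "Build",
        "actionTypeId": {
            "category": "Build",
            "owner": "Custom",  # Jenkins steps are custom action types
            "provider": provider_name,
            "version": "1",
        },
        "configuration": {"ProjectName": project_name},  # assumed property name
        "inputArtifacts": [{"name": "SourceOutput"}],
        "outputArtifacts": [{"name": "BuildOutput"}],
        "runOrder": 1,
    }


# Hypothetical provider name, set identically in the pipeline and the Jenkins job.
print(json.dumps(jenkins_build_action("MyJenkins", "Email_Validator", "MyJenkins"), indent=2))
```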

Deploying the build with CodePipeline

With our project built, the next step is to configure how the pipeline deploys it to an environment for testing or production use. If you only want CodePipeline to build your project, select No Deployment. AWS CodePipeline offers several deployment providers, including:

- AWS CloudFormation

- AWS CodeDeploy

- AWS Elastic Beanstalk

If you are more familiar with AWS CloudFormation and typically use it to manage your environment, AWS provides detailed instructions for using it with CodePipeline in its documentation.

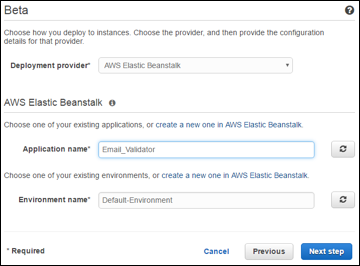

AWS Elastic Beanstalk deployment

AWS Elastic Beanstalk is one of the simplest ways to deploy and manage an application in AWS. You upload your application code and provide some basic configuration, and AWS uses Auto Scaling and Elastic Load Balancing to create and maintain an environment that scales based on demand.

To deploy your project with Elastic Beanstalk, you need an Elastic Beanstalk environment already configured in AWS. To integrate it, you provide the application name and environment name to CodePipeline.

Figure 7. Deploy Your Project into an Elastic Beanstalk Environment
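The Elastic Beanstalk step maps to a deploy action that names the application and environment. In this sketch the application and environment names are hypothetical, and `BuildOutput` is assumed to be the artifact produced by the build stage.

```python
import json


def elastic_beanstalk_deploy_action(application: str, environment: str, artifact: str = "BuildOutput") -> dict:
    """Return a deploy action that pushes the build artifact to an existing Beanstalk environment."""
    return {
        "name": "Deploy",
        "actionTypeId": {
            "category": "Deploy",
            "owner": "AWS",
            "provider": "ElasticBeanstalk",
            "version": "1",
        },
        "configuration": {
            "ApplicationName": application,  # must already exist in Elastic Beanstalk
            "EnvironmentName": environment,  # environment that receives the new version
        },
        "inputArtifacts": [{"name": artifact}],
        "runOrder": 1,
    }


print(json.dumps(elastic_beanstalk_deploy_action("email-validator", "email-validator-prod"), indent=2))
```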

AWS CodeDeploy deployment

AWS CodeDeploy is a service that automates and coordinates the deployment of an application into an environment. Two deployment types are available.

In-place deployment takes each instance that hosts the application offline in turn, installs the new version of the application, and brings the instance back online, so the same set of instances is reused.

Blue-green deployment creates a new environment with new instances and installs the new application version on them. After an optional wait time for verification and testing, an Elastic Load Balancing (ELB) load balancer begins rerouting traffic from the original environment to the new environment.
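To make the difference between the two deployment types concrete, here is a toy Python model. It does not call CodeDeploy; the instance names and the `verify` callback are hypothetical, and the in-place loop updates one instance at a time, similar in spirit to the `CodeDeployDefault.OneAtATime` deployment configuration.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Instance:
    name: str
    version: str
    online: bool = True


def in_place_deploy(fleet: List[Instance], new_version: str, log: List[str]) -> List[Instance]:
    """Update the same instances one at a time: take offline, replace, bring back online."""
    for inst in fleet:
        inst.online = False
        log.append(f"{inst.name} offline")
        inst.version = new_version
        inst.online = True
        log.append(f"{inst.name} online with {new_version}")
    return fleet


def blue_green_deploy(
    blue: List[Instance], new_version: str, verify: Callable[[List[Instance]], bool]
) -> List[Instance]:
    """Stand up a replacement (green) fleet and switch traffic only if it verifies."""
    green = [Instance(f"green-{i}", new_version) for i in range(len(blue))]
    if not verify(green):
        return blue  # traffic stays on the original environment
    return green  # the load balancer now routes to the new environment


events: List[str] = []
fleet = [Instance("i-1", "v1"), Instance("i-2", "v1")]
in_place_deploy(fleet, "v2", events)
print(events)
live = blue_green_deploy([Instance("i-3", "v1")], "v2", lambda g: all(i.online for i in g))
print([(i.name, i.version) for i in live])
```

The in-place path trades extra capacity for reduced availability during the rollout, while the blue-green path keeps the original fleet serving until the replacement has been verified.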

Additional information on AWS CodeDeploy, including how to create and configure new deployments, can be found in the AWS CodeDeploy documentation.

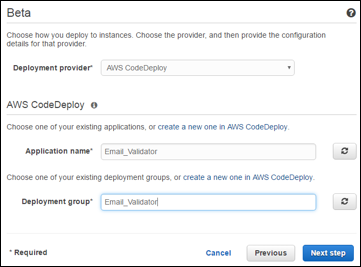

To integrate CodeDeploy into the pipeline, you’ll need to create a CodeDeploy application and deployment group, and then enter the Application name and Deployment group in the pipeline configuration.

Figure 8. Using CodeDeploy to Deploy Instances of your Application
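The CodeDeploy step maps to a deploy action that names the CodeDeploy application and deployment group. In this sketch both names are hypothetical, and `BuildOutput` is assumed to be the build stage’s artifact; whether the deployment runs in place or blue-green is decided by the deployment group, not by this action.

```python
import json


def codedeploy_action(application: str, deployment_group: str, artifact: str = "BuildOutput") -> dict:
    """Return a deploy action that hands the build artifact to a CodeDeploy deployment group."""
    return {
        "name": "Deploy",
        "actionTypeId": {
            "category": "Deploy",
            "owner": "AWS",
            "provider": "CodeDeploy",
            "version": "1",
        },
        "configuration": {
            "ApplicationName": application,
            "DeploymentGroupName": deployment_group,
        },
        "inputArtifacts": [{"name": artifact}],
        "runOrder": 1,
    }


print(json.dumps(codedeploy_action("EmailValidatorApp", "EmailValidatorGroup"), indent=2))
```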

Determining appropriate access for the pipeline

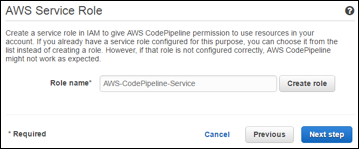

Once configured, the pipeline will need access to various services and tools within the AWS environment. Fortunately, the pipeline creation process makes this easy to handle: once you’ve configured the source, build and deployment stages for your pipeline, you choose the service role the pipeline runs under.

If you’ve previously defined other pipelines, you may already have a suitable role, in which case you can enter it directly. If not, the Create role button will analyze the pipeline and determine the policies it needs to run successfully.
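If you create the service role yourself instead of using the Create role button, the piece that ties it to CodePipeline is the trust policy. This is a minimal sketch with a hypothetical account ID and role name; the permissions policy the pipeline needs depends on the services it uses and is not shown.

```python
import json


def codepipeline_trust_policy() -> dict:
    """Trust policy that lets the CodePipeline service assume the role."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Effect": "Allow",
                "Principal": {"Service": "codepipeline.amazonaws.com"},
                "Action": "sts:AssumeRole",
            }
        ],
    }


def role_arn(account_id: str, role_name: str) -> str:
    """Format the role ARN that a pipeline definition references."""
    if not (account_id.isdigit() and len(account_id) == 12):
        raise ValueError("AWS account IDs are 12 digits")
    return f"arn:aws:iam::{account_id}:role/{role_name}"


print(json.dumps(codepipeline_trust_policy(), indent=2))
print(role_arn("111122223333", "EmailValidatorPipelineRole"))  # hypothetical role
```

Saved as a file, the trust policy could be used with `aws iam create-role --role-name EmailValidatorPipelineRole --assume-role-policy-document file://trust.json`.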

Once you configure the service role, you’ll have the opportunity to review and create your pipeline configuration. Below is the summary of the pipeline configured as part of this walkthrough. Your pipeline may be similar. Clicking on Create pipeline will create the pipeline and begin its first execution.

Figure 9. Creating and Setting the Service Role for the Pipeline
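Pulling the walkthrough together, the sketch below assembles a three-stage pipeline (CodeCommit source, CodeBuild build, CodeDeploy deploy) into the JSON shape accepted by `aws codepipeline create-pipeline --cli-input-json`. The role ARN, artifact bucket, pipeline name, and CodeDeploy application and deployment group names are hypothetical, and `check_artifact_flow` is a simplified helper of our own that ignores run order within a stage.

```python
import json
from typing import Iterable


def action(name: str, category: str, provider: str, configuration: dict,
           inputs: Iterable[str] = (), outputs: Iterable[str] = ()) -> dict:
    """Build one action entry with an AWS-owned action type."""
    return {
        "name": name,
        "actionTypeId": {"category": category, "owner": "AWS",
                         "provider": provider, "version": "1"},
        "configuration": configuration,
        "inputArtifacts": [{"name": n} for n in inputs],
        "outputArtifacts": [{"name": n} for n in outputs],
        "runOrder": 1,
    }


def build_pipeline(name: str, role_arn: str, artifact_bucket: str) -> dict:
    """Assemble the source, build and deploy stages used in this walkthrough."""
    stages = [
        {"name": "Source", "actions": [action(
            "Source", "Source", "CodeCommit",
            {"RepositoryName": "Email_Validator", "BranchName": "master"},
            outputs=["SourceOutput"])]},
        {"name": "Build", "actions": [action(
            "Build", "Build", "CodeBuild", {"ProjectName": "Email_Validator"},
            inputs=["SourceOutput"], outputs=["BuildOutput"])]},
        {"name": "Deploy", "actions": [action(
            "Deploy", "Deploy", "CodeDeploy",
            {"ApplicationName": "EmailValidatorApp",         # hypothetical
             "DeploymentGroupName": "EmailValidatorGroup"},  # hypothetical
            inputs=["BuildOutput"])]},
    ]
    return {"pipeline": {
        "name": name,
        "roleArn": role_arn,
        "artifactStore": {"type": "S3", "location": artifact_bucket},
        "stages": stages,
    }}


def check_artifact_flow(definition: dict) -> None:
    """Raise ValueError if an action consumes an artifact no earlier stage produced."""
    produced = set()
    for stage in definition["pipeline"]["stages"]:
        for act in stage["actions"]:
            for art in act["inputArtifacts"]:
                if art["name"] not in produced:
                    raise ValueError(
                        f"{stage['name']}/{act['name']} needs unknown artifact {art['name']}")
        for act in stage["actions"]:
            produced.update(a["name"] for a in act["outputArtifacts"])


pipeline = build_pipeline(
    "Email_Validator_Pipeline",
    "arn:aws:iam::111122223333:role/EmailValidatorPipelineRole",  # hypothetical
    "my-pipeline-artifact-bucket",                                # hypothetical
)
check_artifact_flow(pipeline)
print(json.dumps(pipeline, indent=2))
```

Saved as `pipeline.json`, the printed output could be passed to `aws codepipeline create-pipeline --cli-input-json file://pipeline.json`, which, like the console’s Create pipeline button, starts the first execution.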

Sumo Logic: AWS monitoring plus multi-cloud support

Sumo Logic’s multi-cloud SaaS analytics platform has integrations for major cloud service providers like Google Cloud, Microsoft Azure and AWS, which continue to take hold as cloud adoption grows.

With Sumo Logic’s AWS monitoring capability, users benefit from deep integration with the AWS platform and security services. Sumo Logic’s log aggregation, machine learning and pattern detection capabilities make it easy for enterprise organizations to gain visibility into AWS deployments, manage application performance, maintain cloud security and comply with internal and external standards. If you are already working with AWS or considering it to amplify your business results, consider Sumo Logic for deep insights into your application performance and security.